Putting your version on GitHub sounds like great idea.

Guess that would be a fork.

But @n_ermosh did post a few months ago …. Maybe he would take pull request. If can figure that out…

Yeah was waiting to see if he's lurking about. Might have to figure out GitHub again - been ages since I pushed stuff there.

BTW am now adding AUGD and AUGS constant value printing (where consumed) so we can see the full 32 bit values after augmenting. We can't show the full AUG values in the AUGD/AUGS statements themselves because the instruction that consumes it follows later and has not been processed yet. I also still want to show the original unaugmented value so this code can be re-assembled if you generate it without the addresses and that's why the final augmented value is shown only after the comment starts. Hopefully we can ultimately lookup any matching symbol names at these address values too. That can help to figure out some data variable accesses when memory is read or written there.

Update: there is just one more missing item that the decoding of AUGS/AUGD stuff will also assist. The special augmented and unaugmented hub memory accesses using PTRx pointer index operations like: mov r0, ptra[##$2A332] and mov r0, ptra[123]

Right now these are not yet handled at all, either with augmented constants or without. I should add these which I think will complete the P2LLVM parser to accept all standard P2 instructions and aliases, although compile time expressions for calculating constants may still be limited - I've not looked into that.

The special SPIN2 language control directives like ORGH, FIT, RES etc won't be implemented though, as this assembler already uses its own (primarily GNU GAS compatible) directives.

@rogloh,

To put your changes up and make a pull request would mean you have to fork a copy, do a pull request against that copy, do the submodule updates, copy the changes in, do a push. That would make a version that we could pull down and test.

@iseries said:

@rogloh,

To put your changes up and make a pull request would mean you have to fork a copy, do a pull request against that copy, do the submodule updates, copy the changes in, do a push. That would make a version that we could pull down and test.

Mike

Thanks @iseries for the refresher. I'll probably do exactly that in the end. I just want to complete the last part to parse which is the pointer indexing stuff. Then I should try the pull request. One good thing is that nothing seems to have changed since I dragged my tree down in the first place so no additional merging needs to be done at this stage.

@rogloh glad thinking about putting on GitHub later…

Would be nice to have build instructions for Linux if possible. Guess my problem might be that Linux people are so used building stuff they don’t need much help whereas I’m mostly lost…

Also, don’t know if it can work here but some GitHub stuff appears to be automagically compiled be them if set up (?)

@Rayman said:

@rogloh Suppose if spin2cpp still works, then can add spin2 drivers to c/c++ code right?

Potentially, although you'd need to worry about memory layout and startup code etc. Not sure they'll be fully compatible at that level without changes in how you structure and launch the bundled application code. But it's a compelling solution if we could use both toolchains together, particularly for something like MicroPython which could make use of other SPIN2 based objects converted into C code.

Got a bit further on this and tested all the opcodes for the new P2 instructions I'd recently added to P2LLVM by comparing all the different possible {#}D/{#}S forms with the binary output that flexspin generates. This is the list:

Found a typo and fixed the SETDACS opcode before it became buried and would cause problems later. But one error wasn't too bad given this was a manual process typing it all in.

I did find a couple of things with the P2LLVM output that worked differently when I assembled the same file using flexspin and compared the outputs.

1) Relative branch distances are taken directly from the immediate value entered for P2LLVM, while flexspin wants a symbol or target address in order to compute its relative branch amount. If you enter an immediate it will just use that as the branch address amount in P2LLVM and it won't encode the same as flexspin which will take the number provided and figure out the distance from the current address to that address target and encode that value (unless the \ prefix is added, in which case it will use the address directly). It might be good to bring P2LLVM parser in line with how flexspin behaves by default at least when labels are not used as branch targets, or perhaps flexspin is doing it wrong.

2) Some aliases are being incorrectly matched when immediates are being used. For example: NOT D,#2 can incorrectly be matched as NOT D wc (alias form) because the #2 immediate S-operand in the NOT D, #S form is the same value as the encoded WC flag. It looks like effects flag operands need to have their own type and not get encoded as immediates in order for the aliases to work in all situations. For now until that is resolved (which needs additional effort) I'll just disable the affected aliases. I think it's this list of aliases NOT ABS NEG NEGC NEGNC NEGZ NEGNZ TEST ENCOD ONES

One other thing that I found is that the percentage symbol does indeed let you enter binary constants as it should after I'd enabled Motorola mode in the lexing. The $ prefix was working for hex but % was not working for binary when I tested it earlier. Turns out this was just because inline assembly also uses % as an escape character. To get the real % you need to use %%, although this is only needed for the inline assembler. The regular assembler that works with separate .s files only need a single % for binary number encoding (or can also use the 0b prefix, similar to 0x for hex). You can see both these prefixes now working in the captured log below. Unfortunately I don't think we can readily make the %% prefix represent two-bit quaternary numbers (base 4) as this is buried deep in the common lexing code used by all microprocessor targets which I certainly don't want to mess with. But as it's rarely used and we can easily convert those values into binary instead it shouldn't be a major deal to not have that feature implemented in P2LLVM.

It most likely is useful but last time I wanted to use it I think I had issues with requiring some extra python software module that apparently collide with my Mac's own versions when I tried to install them. So I stopped there.

It's pretty amazing what can be done with this LLVM toolchain if you mess about with it enough. By fighting/arguing/persevering with a google AI for several hours I was finally able to get the disassembly listing to produce the labels needed for branch targets. OMG that was a whole process in itself, but it finally appears to be working.

In the listing it is now making labels for each branch target in HUBRAM and referenced address in the code and automatically printing them after the tjz and jmp instructions. I've still got my own comment string being printed alongside that shows the target address and was used to help validate things but that is sort of redundant now. Relative branch addressing seems correct too.

I was also hoping to enable this --visualize-branches ASCII art feature that would look something like this, but it apparently came in on a later version of the toolchain and is not supported by P2LLVM. P2LLVM is based off LLVM 14.

ps: Still don't like the pattern above with a TJZ falling through after the test by skipping over the following JMP. If the target is close enough it should be able to just do the reverse test condition and only branch with a single instruction and continue through otherwise avoiding a branch penalty in one of the cases. That's another optimization that needs to be added in time. There's plenty of others too including a conditional branch without the prior compare operation to set flags, by instead setting Z directly off the memory reads, ALU operations. It also avoids TJZ since you can do if_z JMP xxx and purely use absolute addressing (for this external memory scheme I have in mind).

Was able to fix the alias mismatching problem mentioned earlier by creating a new type for the WC/WZ/WCZ effects flags instead of treating it like an immediate. I think that is sorted now. Once I've tested all the new instructions I'll do the GitHub thing.

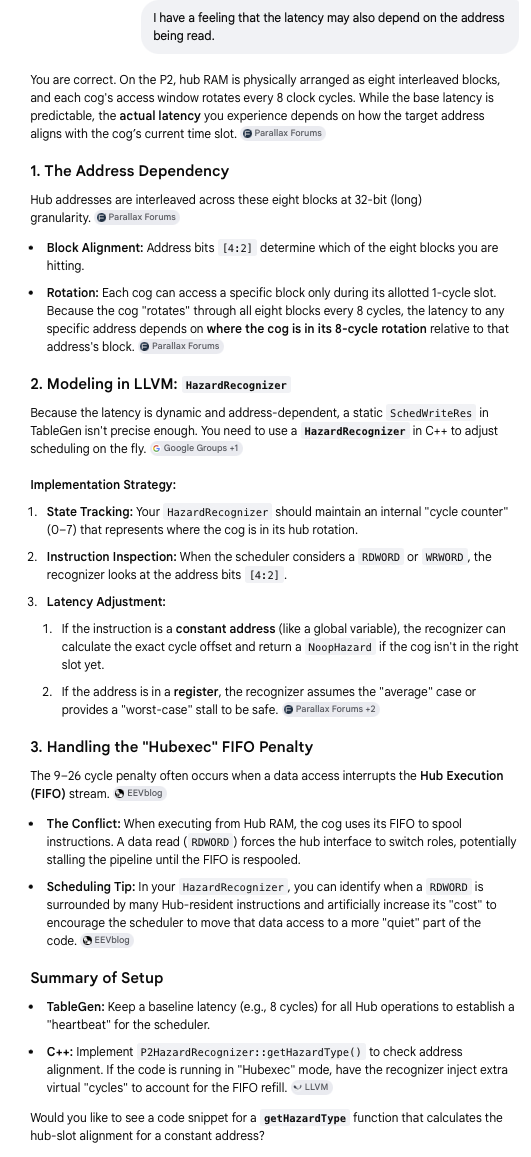

I also keep thinking about other possible P2 specific optimizations as I know there is scope for a lot of improvements. By asking an AI about it, there could be potential for hub scheduling improvements with LLVM which would be good. Here's a snippet of a conversation I had with this AI regarding hubexec memory access and pipelines, offering suggestions to me.

Point 3 is plain wrong. A data access can't interrupt the FIFO because the FIFO always has priority to hubRAM. Second, the FIFO stays well ahead of the cog's needs so any data accesses will never stall execution due to FIFO empty.

The FIFO can interrupt a data access though. FIFO reads of hubRAM are done in bursts of 6 longwords at a time - after initial filling that is. It would be difficult to predict I suspect.

As for state tracking of the data addresses, the biggest win is not in the absolute address but in the address delta of two accesses vs execution time.

Ok, I see the point you are making @evanh . The 9-26 cycle time for a memory read during hub exec is not really the RDxxxx doing its read causing a FIFO stall which needs reloading after the RDxxxx operation completes, but it's the intermittent FIFO accesses delaying the RDxxxx for a burst of 6 longs, at which point the read finally gets its go, but also has to wait for the hub rotation to align to its address after that (and maybe another FIFO read burst...?). 26 cycles worst case is up to 10 more than normal in COGEXEC mode, perhaps that accounts for more frequent FIFO access (immediately after a branch stall for example).

I can see it's far more complex to model that. I would hope there is some scope for improvements though if it can work out the amount of time between two accesses to allocate to non HUB instructions in between (at least when it knows the addresses). It could know the address delta to be zero if it does a read, a modify and write back to the same variable in memory which is a common thing to do in C, either on a global data item or a local in the current stack frame.

One other thing I was asking it about was using SKIP to effectively "jump" over sequential blocks of code vs the branch penalty in hub exec. That one seems quite doable, as a last step optimization once all instructions are fixed in place. Branches take 13-20 clocks in hub exec so we can get up to 5 instructions jumped via a SKIP instead of a conditional short JMP and still gain clocks in all cases. Only applies to forward jumps of course.

@rogloh said:

One other thing I was asking it about was using SKIP to effectively "jump" over sequential blocks of code vs the branch penalty in hub exec. That one seems quite doable, as a last step optimization once all instructions are fixed in place. Branches take 13-20 clocks in hub exec so we can get up to 5 instructions jumped via a SKIP instead of a conditional short JMP and still gain clocks in all cases. Only applies to forward jumps of course.

I did that, hand crafted of course, to gain myself a third execution condition in sdsd.cc's block read routine. It contains limited branches - A subroutine for CRC processing and the data block count loop were it. C flag used to remember if start-bit found. Z flag used to remember if CRC passed. And skipping used for first and last data block handling because CRC processing is buffered/lagged into the following block.

I suppose that's actually four conditions. The main idea was to optimise worse case execution times the most. The only niggle I had was it meant adding two SKIPF instructions and two skip patterns.

@rogloh said:

... It could know the address delta to be zero if it does a read, a modify and write back to the same variable in memory which is a common thing to do in C, either on a global data item or a local in the current stack frame.

@rogloh said:

Ok, I see the point you are making @evanh . The 9-26 cycle time for a memory read during hub exec is not really the RDxxxx doing its read causing a FIFO stall which needs reloading after the RDxxxx operation completes, but it's the intermittent FIFO accesses delaying the RDxxxx for a burst of 6 longs, at which point the read finally gets its go, but also has to wait for the hub rotation to align to its address after that (and maybe another FIFO read burst...?). 26 cycles worst case is up to 10 more than normal in COGEXEC mode, perhaps that accounts for more frequent FIFO access (immediately after a branch stall for example).

The +10 will be 8 for the extra rotation plus one if a word misalign ... plus one for something else.

Yeah, hubexec is always going to be a little erratic. But still predictable enough to make general optimising gains.

@rogloh said:

Ok, I see the point you are making @evanh . The 9-26 cycle time for a memory read during hub exec is not really the RDxxxx doing its read causing a FIFO stall which needs reloading after the RDxxxx operation completes, but it's the intermittent FIFO accesses delaying the RDxxxx for a burst of 6 longs, at which point the read finally gets its go, but also has to wait for the hub rotation to align to its address after that (and maybe another FIFO read burst...?). 26 cycles worst case is up to 10 more than normal in COGEXEC mode, perhaps that accounts for more frequent FIFO access (immediately after a branch stall for example).

The +10 will be 8 for the extra rotation plus one if a word misalign ... plus one for something else.

Yeah, hubexec is always going to be a little erratic. But still predictable enough to make general optimising gains.

Maybe something that can be achieved here is to simply assume that the FIFO has filled already or won't otherwise interrupt the current RD (because the best case for HUBEXEC timing is still the same as for COGEXEC which means no FIFO access collided), and when we are writing back to the same address we just read from, then use a specific number of instructions between the RD and the next WR to that address. Or a similar approach if it RD's another item at some known address offset from a previous read. I know it won't always apply but if it applied often enough it may still give us some gains where other instructions can be executed in the wasted waiting time.

Great minds think alike! Although this was an easy one. It would also be easy to check too if simple relative jumps are being used. Just hunt for very fixed JMP #1, JMP #2 .. JMP #5 encodings (relative offsets shown), preserve the condition code and replace with an associated SKIP #mask in a last pass optimization when all instruction's addresses are locked in. Nothing gets shifted in memory, and any conditional branching would be preserved. Any given branch path should be able to work independently with their own associated SKIPs as the path taken through the code won't have to overlap/recurse the SKIPs in any way no matter what path is taken, they'll simply always be sequentially executed. It would work for JMPREL too in immediate form, while the register form is something I'm looking into for switch statement decoding...

In looking at the P2 code generated by the P2LLVM backend for MicroPython I am occasionally seeing some cases like this where JMP #4 is issued and would be a candidate for the conversion to SKIP mentioned above, however it appears that the code is not doing the best thing here which is to place a single conditional JMP instruction and fall through to the LBB9_10 label otherwise.

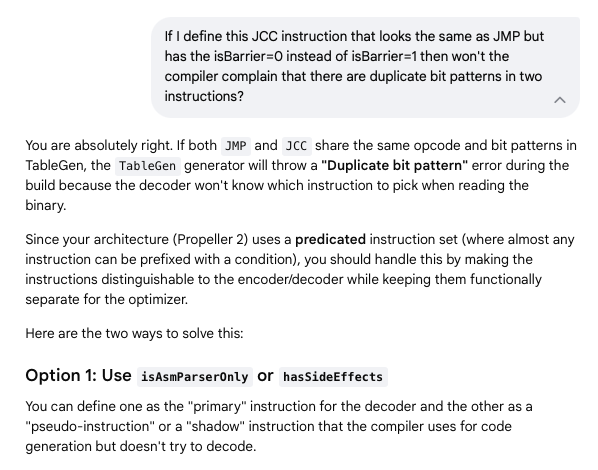

Because the conditional jump is shared with the unconditional jump instruction (only the IF_Z condition is different) the "isBarrier" value being set to 1 for both cases means the compiler will not let the execution fall through, and it will enforce the two jumps. We really need two "instructions" defined for JMP, one with isBarrier set to 1 and the other with it set to 0. It's a bit of work to change, but it probably should be done at some point to eliminate this inefficient double jump combo, although a SKIP replacing this JMP #4 would really help alleviate it also in the interim. Ideally it gets fixed the proper way, and I'm hoping it resolves the label generation for fall through cases so the extra blank lines and labels printed per function don't get issued - having all the extra blank lines in a function only seems to confuse things when reading through the code.

That's exactly what I want it to do. I'll try the change to create a conditional JMP branch instruction. My only concern is that it will have the same bit pattern as the regular jump (because conditions are specified separately) and there'll be a collision in the opcode generated when it tries to build the list of instruction encodings and sees two the same...and giggle AI says the same when I queried it, although it now has some suggested workarounds.

Comments

Yeah was waiting to see if he's lurking about. Might have to figure out GitHub again - been ages since I pushed stuff there.

BTW am now adding AUGD and AUGS constant value printing (where consumed) so we can see the full 32 bit values after augmenting. We can't show the full AUG values in the AUGD/AUGS statements themselves because the instruction that consumes it follows later and has not been processed yet. I also still want to show the original unaugmented value so this code can be re-assembled if you generate it without the addresses and that's why the final augmented value is shown only after the comment starts. Hopefully we can ultimately lookup any matching symbol names at these address values too. That can help to figure out some data variable accesses when memory is read or written there.

9c: 80 03 00 ff augs #$70000 >> 9 a0: 00 f0 07 f6 mov ptra, #0 ' ##$70000 a4: 0c 03 00 ff augs #$61800 >> 9 a8: 34 a1 07 f6 mov r0, #$134 ' ##$61934 ac: d0 a5 1b f2 cmp r2, r0 wcz b0: 04 00 90 cd if_c jmp #$4 ' <$b8> b4: 0c 00 90 fd jmp #$c ' <$c4>Update: there is just one more missing item that the decoding of AUGS/AUGD stuff will also assist. The special augmented and unaugmented hub memory accesses using PTRx pointer index operations like:

mov r0, ptra[##$2A332]andmov r0, ptra[123]Right now these are not yet handled at all, either with augmented constants or without. I should add these which I think will complete the P2LLVM parser to accept all standard P2 instructions and aliases, although compile time expressions for calculating constants may still be limited - I've not looked into that.

The special SPIN2 language control directives like ORGH, FIT, RES etc won't be implemented though, as this assembler already uses its own (primarily GNU GAS compatible) directives.

@rogloh,

To put your changes up and make a pull request would mean you have to fork a copy, do a pull request against that copy, do the submodule updates, copy the changes in, do a push. That would make a version that we could pull down and test.

Mike

Thanks @iseries for the refresher. I'll probably do exactly that in the end. I just want to complete the last part to parse which is the pointer indexing stuff. Then I should try the pull request. One good thing is that nothing seems to have changed since I dragged my tree down in the first place so no additional merging needs to be done at this stage.

@rogloh glad thinking about putting on GitHub later…

Would be nice to have build instructions for Linux if possible. Guess my problem might be that Linux people are so used building stuff they don’t need much help whereas I’m mostly lost…

Also, don’t know if it can work here but some GitHub stuff appears to be automagically compiled be them if set up (?)

@rogloh Suppose if spin2cpp still works, then can add spin2 drivers to c/c++ code right?

Potentially, although you'd need to worry about memory layout and startup code etc. Not sure they'll be fully compatible at that level without changes in how you structure and launch the bundled application code. But it's a compelling solution if we could use both toolchains together, particularly for something like MicroPython which could make use of other SPIN2 based objects converted into C code.

Got a bit further on this and tested all the opcodes for the new P2 instructions I'd recently added to P2LLVM by comparing all the different possible {#}D/{#}S forms with the binary output that flexspin generates. This is the list:

JMPREL LOC CALLD TJS TJNS TJV REP TEST TESTN CALLB BITxxx DIRxxx OUTxxx FLTxxx DRVxxx TESTP TESTPN TESTB TESTBN ALTR ALTB ALTI SETR SETS SETD COGATN XZERO CRCBIT CRCNIB WAITxxx SKIP SKIPF EXECF PUSH POP ADDPIX MULPIX BLNPIX MIXPIX ALLOWI STALLI TRGINT1 TRGINT2 TRGINT3 NIXINT1 NIXINT2 NIXINT3 ALTSN ALTGN ALTSB ALTGB ALTSW ALTGW SETLUTS SETCY SETCI SETCQ SETCFRQ SETCMOD SETPIV SETPIX SETPAT SETDACS GETXACC INCMOD DECMOD FBLOCK SETSCP SETINT1 SETINT2 SETINT3 GETPTR GETSCP CMPM CMPSUB RFVAR RFVARS SCA SCAS MUXC MUXNC MUXZ MUXNZ NEGC NEGNC NEGZ NEGNZ RCZR RCZL JINT JCT1 JCT2 JCT3 JSE1 JSE2 JSE3 JSE4 JPAT JFBW JXMT JXFI JXRO JXRL JATN JQMT JNINT JNCT1 JNCT2 JNCT3 JNSE1 JNSE2 JNSE3 JNSE4 JNPAT JNFBW JNXMT JNXFI JNXRO JNXRL JNATN JNQMT CALLPA CALLPBFound a typo and fixed the SETDACS opcode before it became buried and would cause problems later. But one error wasn't too bad given this was a manual process typing it all in.

I did find a couple of things with the P2LLVM output that worked differently when I assembled the same file using flexspin and compared the outputs.

1) Relative branch distances are taken directly from the immediate value entered for P2LLVM, while flexspin wants a symbol or target address in order to compute its relative branch amount. If you enter an immediate it will just use that as the branch address amount in P2LLVM and it won't encode the same as flexspin which will take the number provided and figure out the distance from the current address to that address target and encode that value (unless the \ prefix is added, in which case it will use the address directly). It might be good to bring P2LLVM parser in line with how flexspin behaves by default at least when labels are not used as branch targets, or perhaps flexspin is doing it wrong.

2) Some aliases are being incorrectly matched when immediates are being used. For example:

NOT D,#2can incorrectly be matched asNOT D wc(alias form) because the #2 immediate S-operand in theNOT D, #Sform is the same value as the encoded WC flag. It looks like effects flag operands need to have their own type and not get encoded as immediates in order for the aliases to work in all situations. For now until that is resolved (which needs additional effort) I'll just disable the affected aliases. I think it's this list of aliasesNOT ABS NEG NEGC NEGNC NEGZ NEGNZ TEST ENCOD ONESOne other thing that I found is that the percentage symbol does indeed let you enter binary constants as it should after I'd enabled Motorola mode in the lexing. The $ prefix was working for hex but % was not working for binary when I tested it earlier. Turns out this was just because inline assembly also uses % as an escape character. To get the real % you need to use %%, although this is only needed for the inline assembler. The regular assembler that works with separate .s files only need a single % for binary number encoding (or can also use the 0b prefix, similar to 0x for hex). You can see both these prefixes now working in the captured log below. Unfortunately I don't think we can readily make the %% prefix represent two-bit quaternary numbers (base 4) as this is buried deep in the common lexing code used by all microprocessor targets which I certainly don't want to mess with. But as it's rarely used and we can easily convert those values into binary instead it shouldn't be a major deal to not have that feature implemented in P2LLVM.

❯ cat test.s .text mov r0, #%10101 mov r0, #$110 ❯ cl -c -o test.o test.s ❯ objd -d --print-imm-hex test.o test.o: file format elf32-p2 Disassembly of section .text: 00000000 <.text>: 0: 15 a0 07 f6 mov r0, #$15 4: 10 a1 07 f6 mov r0, #$110 ❯@rogloh Is the test suite of any use?

https://github.com/ne75/p2llvm/tree/master/test/src

It most likely is useful but last time I wanted to use it I think I had issues with requiring some extra python software module that apparently collide with my Mac's own versions when I tried to install them. So I stopped there.

It's pretty amazing what can be done with this LLVM toolchain if you mess about with it enough. By fighting/arguing/persevering with a google AI for several hours I was finally able to get the disassembly listing to produce the labels needed for branch targets. OMG that was a whole process in itself, but it finally appears to be working.

In the listing it is now making labels for each branch target in HUBRAM and referenced address in the code and automatically printing them after the tjz and jmp instructions. I've still got my own comment string being printed alongside that shows the target address and was used to help validate things but that is sort of redundant now. Relative branch addressing seems correct too.

I was also hoping to enable this --visualize-branches ASCII art feature that would look something like this, but it apparently came in on a later version of the toolchain and is not supported by P2LLVM. P2LLVM is based off LLVM 14.

2a5c: 04 de 97 fb tjz r31, <bb_mp_print_str_1> --+ 2a60: d3 a1 03 fb rdlong r0, r3 | 2a64: 04 a6 07 f1 add r3, #4 | 2a68: d3 a7 03 fb rdlong r3, r3 | 2a6c: 2e a6 63 fd calla r3 | 2a70: <bb_mp_print_str_1>: <-+ 2a70: d2 df 03 f6 mov r31, r2ps: Still don't like the pattern above with a TJZ falling through after the test by skipping over the following JMP. If the target is close enough it should be able to just do the reverse test condition and only branch with a single instruction and continue through otherwise avoiding a branch penalty in one of the cases. That's another optimization that needs to be added in time. There's plenty of others too including a conditional branch without the prior compare operation to set flags, by instead setting Z directly off the memory reads, ALU operations. It also avoids TJZ since you can do

if_z JMP xxxand purely use absolute addressing (for this external memory scheme I have in mind).Was able to fix the alias mismatching problem mentioned earlier by creating a new type for the WC/WZ/WCZ effects flags instead of treating it like an immediate. I think that is sorted now. Once I've tested all the new instructions I'll do the GitHub thing.

I also keep thinking about other possible P2 specific optimizations as I know there is scope for a lot of improvements. By asking an AI about it, there could be potential for hub scheduling improvements with LLVM which would be good. Here's a snippet of a conversation I had with this AI regarding hubexec memory access and pipelines, offering suggestions to me.

Point 3 is plain wrong. A data access can't interrupt the FIFO because the FIFO always has priority to hubRAM. Second, the FIFO stays well ahead of the cog's needs so any data accesses will never stall execution due to FIFO empty.

The FIFO can interrupt a data access though. FIFO reads of hubRAM are done in bursts of 6 longwords at a time - after initial filling that is. It would be difficult to predict I suspect.

As for state tracking of the data addresses, the biggest win is not in the absolute address but in the address delta of two accesses vs execution time.

Ok, I see the point you are making @evanh . The 9-26 cycle time for a memory read during hub exec is not really the RDxxxx doing its read causing a FIFO stall which needs reloading after the RDxxxx operation completes, but it's the intermittent FIFO accesses delaying the RDxxxx for a burst of 6 longs, at which point the read finally gets its go, but also has to wait for the hub rotation to align to its address after that (and maybe another FIFO read burst...?). 26 cycles worst case is up to 10 more than normal in COGEXEC mode, perhaps that accounts for more frequent FIFO access (immediately after a branch stall for example).

I can see it's far more complex to model that. I would hope there is some scope for improvements though if it can work out the amount of time between two accesses to allocate to non HUB instructions in between (at least when it knows the addresses). It could know the address delta to be zero if it does a read, a modify and write back to the same variable in memory which is a common thing to do in C, either on a global data item or a local in the current stack frame.

One other thing I was asking it about was using SKIP to effectively "jump" over sequential blocks of code vs the branch penalty in hub exec. That one seems quite doable, as a last step optimization once all instructions are fixed in place. Branches take 13-20 clocks in hub exec so we can get up to 5 instructions jumped via a SKIP instead of a conditional short JMP and still gain clocks in all cases. Only applies to forward jumps of course.

I did that, hand crafted of course, to gain myself a third execution condition in sdsd.cc's block read routine. It contains limited branches - A subroutine for CRC processing and the data block count loop were it. C flag used to remember if start-bit found. Z flag used to remember if CRC passed. And skipping used for first and last data block handling because CRC processing is buffered/lagged into the following block.

I suppose that's actually four conditions. The main idea was to optimise worse case execution times the most. The only niggle I had was it meant adding two SKIPF instructions and two skip patterns.

Yes, this would be a good case to work on.

The +10 will be 8 for the extra rotation plus one if a word misalign ... plus one for something else.

Yeah, hubexec is always going to be a little erratic. But still predictable enough to make general optimising gains.

Previously: https://github.com/totalspectrum/spin2cpp/issues/181

Maybe something that can be achieved here is to simply assume that the FIFO has filled already or won't otherwise interrupt the current RD (because the best case for HUBEXEC timing is still the same as for COGEXEC which means no FIFO access collided), and when we are writing back to the same address we just read from, then use a specific number of instructions between the RD and the next WR to that address. Or a similar approach if it RD's another item at some known address offset from a previous read. I know it won't always apply but if it applied often enough it may still give us some gains where other instructions can be executed in the wasted waiting time.

Great minds think alike! Although this was an easy one. It would also be easy to check too if simple relative jumps are being used. Just hunt for very fixed

Although this was an easy one. It would also be easy to check too if simple relative jumps are being used. Just hunt for very fixed

JMP #1,JMP #2..JMP #5encodings (relative offsets shown), preserve the condition code and replace with an associatedSKIP #maskin a last pass optimization when all instruction's addresses are locked in. Nothing gets shifted in memory, and any conditional branching would be preserved. Any given branch path should be able to work independently with their own associated SKIPs as the path taken through the code won't have to overlap/recurse the SKIPs in any way no matter what path is taken, they'll simply always be sequentially executed. It would work forJMPRELtoo in immediate form, while the register form is something I'm looking into for switch statement decoding...In looking at the P2 code generated by the P2LLVM backend for MicroPython I am occasionally seeing some cases like this where JMP #4 is issued and would be a candidate for the conversion to SKIP mentioned above, however it appears that the code is not doing the best thing here which is to place a single conditional JMP instruction and fall through to the LBB9_10 label otherwise.

00001520 <.LBB9_8>: 1520: 01 c0 07 f1 add r16, #1 1524: d4 c1 5b f2 cmps r16, r4 wcz 1528: 04 00 90 5d if_nz jmp #4 <.LBB9_10> ' <$1530> 152c: 34 00 90 fd jmp #52 <.LBB9_11> ' <$1564> 00001530 <.LBB9_10>: 1530: e0 c5 03 f6 mov r18, r16 1534: 02 c4 47 f0 shr r18, #2I've tracked it down to these definitions:

// Patterns for various branch conditions and types of branch instructions // unsigned comparison // unconditional jump def : Pat<(br bb:$target), (JMP bb:$target, 1, always)>; def CMPrr : P2InstCZIDS<0b0010000, 0b0, (outs P2Implicit:$cmp), (ins P2GPR:$d, P2GPR:$s), "cmp\t$d, $s">; def : Pat<(brcc SETUEQ, P2GPR:$lhs, P2GPR:$rhs, bb:$target), (JMP bb:$target, (CMPrr P2GPR:$lhs, P2GPR:$rhs, always, wcz), if_z)>; let DecoderMethod = "DecodeJumpInstruction", isBarrier = 1, isBranch = 1, isTerminator = 1 in { def JMP : P2InstRA<0b1101100, 0b1, (outs), (ins reljmptarget:$d, P2Implicit:$cmp), "jmp\t$d">; // relative address jump def JMPa : P2InstRA<0b1101100, 0b0, (outs), (ins absjmptarget:$d, P2Implicit:$cmp), "jmp\t$d">; // absolute address jump. }Because the conditional jump is shared with the unconditional jump instruction (only the IF_Z condition is different) the "isBarrier" value being set to 1 for both cases means the compiler will not let the execution fall through, and it will enforce the two jumps. We really need two "instructions" defined for JMP, one with isBarrier set to 1 and the other with it set to 0. It's a bit of work to change, but it probably should be done at some point to eliminate this inefficient double jump combo, although a SKIP replacing this JMP #4 would really help alleviate it also in the interim. Ideally it gets fixed the proper way, and I'm hoping it resolves the label generation for fall through cases so the extra blank lines and labels printed per function don't get issued - having all the extra blank lines in a function only seems to confuse things when reading through the code.

That one is better served by changing the conditional execution to the second branch and then removing the first branch entirely.

00001520 <.LBB9_8>: 1520: 01 c0 07 f1 add r16, #1 1524: d4 c1 5b f2 cmps r16, r4 wcz 1528: 34 00 90 ad if_z jmp #52 <.LBB9_10> ' <$1560> 152c: e0 c5 03 f6 mov r18, r16 1530: 02 c4 47 f0 shr r18, #2That's exactly what I want it to do. I'll try the change to create a conditional JMP branch instruction. My only concern is that it will have the same bit pattern as the regular jump (because conditions are specified separately) and there'll be a collision in the opcode generated when it tries to build the list of instruction encodings and sees two the same...and giggle AI says the same when I queried it, although it now has some suggested workarounds.

Yeah, follow ARM solutions. Conditional execution is same arrangement there.